20th March 2021

The only possible system architectures for complex control systems: the instruction architecture for computers and the recommendation architecture for learning systems like brains

Electronic computing systems perform a huge number of different types of tasks. A personal computer can carry out user applications like browsing the internet; handling email; creating, editing and storing text, graphics and pictures; playing a wide range of games and so on. Other computers manage the internet, and control a wide range of physical systems like manufacturing lines, flight simulators or actual aircraft.

Nevertheless, all these computing systems have the same high level architecture, called the instruction architecture. There is always a CPU specializing in performing instructions, various memories specializing in reading and writing data at different speeds, and various input/output modules, all connected together by a common bus.

Why is this instruction architecture so ubiquitous across computing systems performing such different tasks?

In the human brain there are major structures like the cortex, the hippocampus, the thalamus, the basal ganglia, the basal forebrain, the amygdala, the hypothalamus and the cerebellum. All mammals have the same structures in their brains, and corresponding structures are seen in birds, reptiles, amphibians and fish. Among invertebrates, the most developed brains are found in cephalopods such as the squid, and even in squids there are structures with similarities to the cortex, hippocampus, thalamus, basal ganglia, cerebellum and hypothalamus.

Why do brains that face very different environmental challenges, and have very different evolutionary histories, have such similar structural components?

MODULAR HIERARCHIES

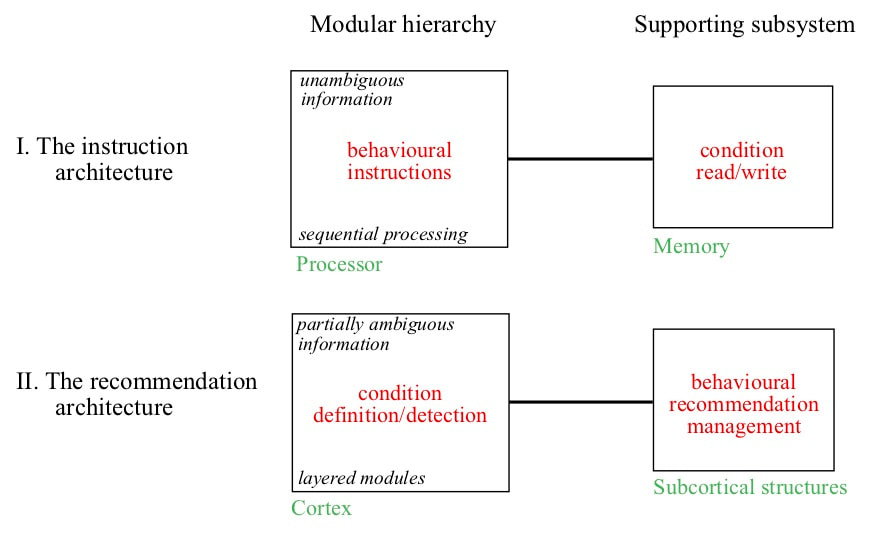

As I discussed in earlier blogs, there are a number of practical considerations that tend to constrain complex control systems like computers and brains into specific architectural forms. Such control systems must detect conditions within the information available to them, and associate different combinations of conditions with different behaviours. The need to conserve information processing resources tends to constrain those resources into modular hierarchies. The need to modify some features while avoiding undesirable side effects means that information exchanges between modules must be minimized as far as possible.

The most resource efficient meaning for a condition detection is an instruction or command, and this is the meaning used in computers. Because these meanings are behaviourally unambiguous, design changes have to be followed by extensive regression testing to detect any undesirable side effects. If behaviours must be learned, such unambiguous meanings are impractical. A condition detection can then only be interpreted as a range of recommendations in favour of many different behaviours, and the behaviour with the largest total recommendation weight across all currently detected conditions is selected. Consequence feedback cannot be used to define conditions: because each condition recommends many different behaviours, changing its definition on the basis of the consequences of one behaviour would introduce an extreme level of undesirable side effects on the others. Hence a complex learning system must have two separate subsystems. One subsystem is a modular hierarchy that defines and detects conditions. The other subsystem associates condition detections with recommendation weights.
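The two-subsystem arrangement can be illustrated with a toy Python sketch. Everything in it is hypothetical: the condition names, the features they are built from, the behaviours, the weights, and the simple additive feedback rule are assumptions chosen for illustration, not a model of any real brain or of a specific published implementation. What it shows is the division of labour: condition definitions stay fixed, every detection contributes weights to several behaviours, the largest total wins, and consequence feedback only adjusts weights.

```python
import copy
from collections import defaultdict

# Subsystem 1 (hypothetical): condition definitions. A condition is a set of
# input features and is detected when all of them are present. Consequence
# feedback never modifies these definitions.
CONDITIONS = {
    "c1": frozenset({"round", "red"}),
    "c2": frozenset({"round", "small"}),
    "c3": frozenset({"long", "yellow"}),
}

# Subsystem 2 (hypothetical): each condition recommends several behaviours,
# each with its own weight.
INITIAL_WEIGHTS = {
    "c1": {"eat": 0.5, "throw": 0.3},
    "c2": {"eat": 0.1, "throw": 0.4},
    "c3": {"eat": 0.6, "ignore": 0.1},
}


def detect(features):
    """Return the names of all conditions fully present in the input features."""
    return [name for name, definition in CONDITIONS.items() if definition <= features]


def select(detections, weights):
    """Sum recommendation weights over all detections and pick the largest total."""
    totals = defaultdict(float)
    for cond in detections:
        for behaviour, w in weights[cond].items():
            totals[behaviour] += w
    return max(totals, key=totals.get)


def feedback(detections, behaviour, reward, weights, rate=0.1):
    """Consequence feedback (assumed additive rule): adjust only the weights
    linking the currently detected conditions to the behaviour just performed."""
    for cond in detections:
        weights[cond][behaviour] = weights[cond].get(behaviour, 0.0) + rate * reward


if __name__ == "__main__":
    weights = copy.deepcopy(INITIAL_WEIGHTS)
    detected = detect({"round", "red", "small"})   # ["c1", "c2"]
    choice = select(detected, weights)              # "throw": 0.7 beats "eat": 0.6
    feedback(detected, choice, reward=-1.0, weights=weights)
    print(select(detected, weights))                # "eat" once "throw" is penalized
```

The feedback step changes which behaviour is selected, yet `CONDITIONS` is never touched, so every other behaviour that relies on `c1` or `c2` still receives exactly the same detections.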

NEED FOR SYNCHRONIZATION OF DATA

In this blog I will discuss the effects of an additional practical consideration. This is the need for something called synchronicity. This consideration is in some ways an aspect of resource limitation, but the constraints it places on system architecture are so significant that I will consider it separately.

What do I mean by synchronicity? Control systems need to process information derived from multiple input states. Often the same resources can process the different states, but there is not time to wait until processing of one input state has completed before starting on the next. To give an example, suppose you are downloading two different web pages to your computer at the same time. Information about each page arrives as a large number of small data packets that may have travelled over different routes to your computer, and so arrive randomly distributed in time. Each packet must be processed to check that it was not corrupted in transit and to extract its data. This processing is very similar for every packet and will be performed on much the same hardware. The synchronicity requirement is that the packets for both web pages be processed on the same hardware without the information from the two pages being confused.
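The web page example can be sketched in Python. This is a toy model, not the real TCP/IP packet format: the `Packet` fields, the `page_id` tag and the use of CRC-32 (via the standard library's `zlib.crc32`) in place of the actual protocol checksums are assumptions for illustration; real protocols keep streams apart with fields such as port and sequence numbers. Every packet goes through the same checking code, and only the tag keeps the two pages from being mixed up.

```python
import random
import zlib
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Packet:
    page_id: str    # hypothetical tag saying which download the packet belongs to
    seq: int        # position of this chunk within its page
    payload: bytes
    checksum: int   # CRC-32 of the payload, computed by the sender


def make_packets(page_id, text, chunk=4):
    """Split a page into small checksummed packets (toy sender)."""
    data = text.encode()
    packets = []
    for seq, start in enumerate(range(0, len(data), chunk)):
        piece = data[start:start + chunk]
        packets.append(Packet(page_id, seq, piece, zlib.crc32(piece)))
    return packets


def process(packets):
    """Run the same checks on every packet, but keep pages apart by their tag."""
    pages = defaultdict(dict)   # page_id -> {seq: payload}
    for p in packets:
        if zlib.crc32(p.payload) != p.checksum:
            continue            # corrupted in transit; a real protocol would re-request it
        pages[p.page_id][p.seq] = p.payload
    return {page_id: b"".join(parts[s] for s in sorted(parts)).decode()
            for page_id, parts in pages.items()}


if __name__ == "__main__":
    stream = make_packets("page-A", "<p>a cat</p>") + make_packets("page-B", "<p>a bird</p>")
    random.shuffle(stream)      # packets arrive interleaved and out of order
    print(process(stream))
```

Shuffling the stream stands in for packets taking different routes; the reassembled pages come out intact regardless of arrival order because each packet carries both its page tag and its position.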

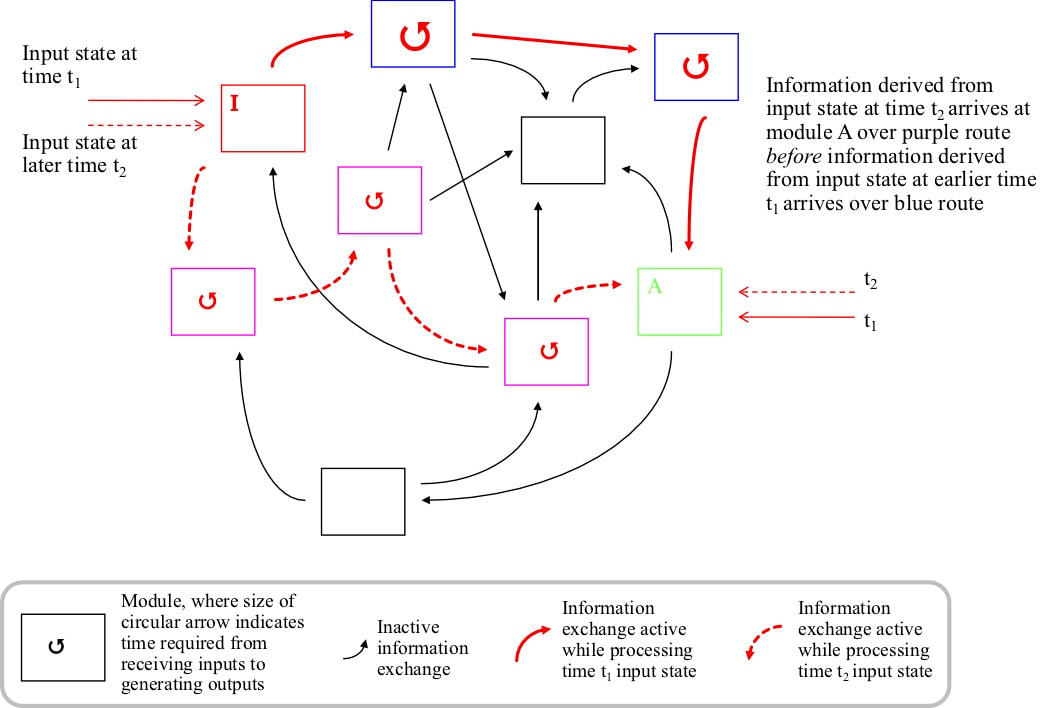

The big problem for synchronicity is created by the combination of differing module processing times and information exchange between modules. Because of such exchanges, information can pass between two modules by many different routes: directly from one module to the other, or via one or more intermediate modules. Each module may take a different amount of time to process information. Suppose that one module gets an input directly from the external environment. Because of information exchange, another module receives processed information about that input by many routes, and the intervening modules take differing amounts of time to process it. Hence information about an environmental input at one point in time will reach that other module scattered in time. Depending on the processing speeds of the intervening modules, some of the information about an input at one point in time may even arrive after information derived from a later input. A receiving module must have some way to ensure that it only processes consistent sets of information. This is the synchronicity problem.
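The scattering can be reproduced with a toy network in Python. The module names, links and processing delays below are all hypothetical; the code enumerates every route from the module that receives the environmental input to a receiving module (called `target` here) and adds up the processing times along each route.

```python
# Hypothetical network: each module lists the modules it sends its output to.
# The route enumeration below assumes the network has no cycles.
LINKS = {
    "input": ["A", "B"],
    "A": ["target"],
    "B": ["C"],
    "C": ["target"],
    "target": [],
}
# Hypothetical processing time of each module, in arbitrary time units.
DELAY = {"input": 1, "A": 2, "B": 1, "C": 4, "target": 0}


def routes(src, dst, path=None):
    """Yield every route from src to dst as a list of module names."""
    path = (path or []) + [src]
    if src == dst:
        yield path
        return
    for nxt in LINKS[src]:
        yield from routes(nxt, dst, path)


def arrivals(input_times):
    """Return sorted (arrival_time, input_time, route) for every copy of every
    input that reaches 'target'. Latency is the sum of processing times of the
    modules on the route before 'target'."""
    events = []
    for t in input_times:
        for route in routes("input", "target"):
            latency = sum(DELAY[m] for m in route[:-1])
            events.append((t + latency, t, "->".join(route)))
    return sorted(events)


if __name__ == "__main__":
    for arrival, origin, route in arrivals([0, 2]):
        print(f"t={arrival}: information from the input at t={origin} via {route}")
```

With these numbers the receiving module gets information about the input at t=2 (arriving at t=5) before the last information about the input at t=0 has arrived (at t=6), which is exactly the ordering problem described above.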

Brains have exactly the same problem. If a brain looks at some object in the environment, there will generally not be enough time to wait until the information about that object has been completely processed before looking at another object. Processing of both objects must overlap, but must not be confused. If a person looks at a cat, a bird and a tree in quick succession, they must not perceive a cat with wings or a bird with leaves.

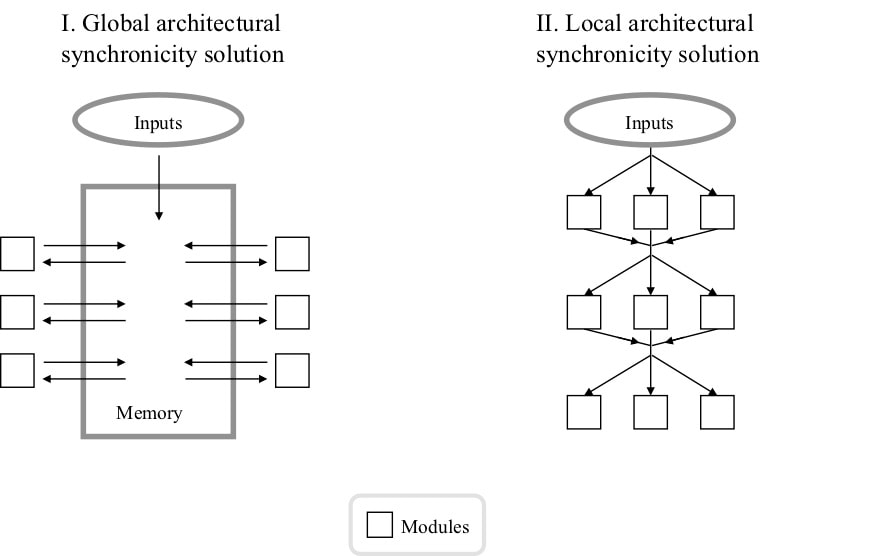

There are two ways the problem can be solved, called global and local. In the global solution, modules do not pass information directly to each other. Instead, module outputs are recorded in a reference memory, and each record includes a tag indicating the input state from which the output was derived. Other modules get their inputs from this memory, and only process inputs together if they have the same tag. One implication of this approach is that a module must wait to begin processing until all the information it needs is in the memory. The global approach therefore imposes a specific sequence on information processing. This sequential processing is characteristic of computer systems.
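A minimal Python sketch of the global solution, assuming a hypothetical `ReferenceMemory` class: each record is keyed by a tag for the input state, and a consuming module reads its inputs only once every module it depends on has written an output for that tag. The tags and module names are invented for illustration, borrowing the cat and bird from the example above.

```python
from collections import defaultdict


class ReferenceMemory:
    """Shared store of module outputs, each tagged with the input state it came from."""

    def __init__(self):
        self._records = defaultdict(dict)   # tag -> {producer_module: output}

    def write(self, tag, producer, output):
        self._records[tag][producer] = output

    def read_complete(self, tag, producers):
        """Return outputs for this tag only if every required producer has written.
        Returns None otherwise, meaning the consuming module must wait."""
        record = self._records.get(tag, {})
        if all(p in record for p in producers):
            return {p: record[p] for p in producers}
        return None


if __name__ == "__main__":
    memory = ReferenceMemory()
    memory.write("look-1", "shape", "four legs")      # the cat
    memory.write("look-2", "shape", "two legs")       # the bird
    memory.write("look-2", "surface", "feathers")
    print(memory.read_complete("look-1", ["shape", "surface"]))  # None: still waiting
    memory.write("look-1", "surface", "fur")
    print(memory.read_complete("look-1", ["shape", "surface"]))  # {'shape': 'four legs', 'surface': 'fur'}
```

Because outputs are only ever combined under the same tag, "four legs" can never be paired with "feathers", but the price is the memory itself and the waiting, which forces the sequential ordering the paragraph describes.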

In the alternative local approach to synchronicity, modules are arranged in a sequence of layers. Modules in one layer only get information from the preceding layer, and only pass information to the next layer. Every module in a layer has to take the same amount of time to process its inputs. Information derived from one input state then moves through the layers in step, arriving at each layer at the same time, so no tags and no reference memory are needed. However, it is likely that some information will need to be exchanged outside the strict layer to layer arrangement, and unlikely that all the modules in one layer will always take exactly the same amount of time. Some errors are therefore almost certain. If, as in electronic systems, every information exchange is an unambiguous behavioural command, such errors cannot be tolerated. However, if information exchanges are partially ambiguous behavioural recommendations, a small degree of error is tolerable and the resource cost of the reference memory can be avoided.
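The difference in error tolerance can be made concrete with a toy Python sketch. All conditions, behaviours, weights and commands here are hypothetical. A bird is being processed when one condition detection from the previously seen cat (`fur`) arrives late. Read as recommendations, the stray detection only adds a little weight and the selected behaviour is unchanged; read as unambiguous instructions, the same stray detection triggers an extra command.

```python
from collections import defaultdict

# Hypothetical recommendation weights: condition -> {behaviour: weight}
RECOMMENDATIONS = {
    "wings": {"watch": 0.8},
    "beak": {"watch": 0.5},
    "chirp": {"watch": 0.4, "listen": 0.3},
    "fur": {"approach": 0.6, "avoid": 0.1},
}

# Hypothetical instruction table: each condition is an unambiguous command
INSTRUCTIONS = {"wings": "watch", "beak": "watch", "chirp": "listen", "fur": "approach"}


def recommended_behaviour(detections):
    """Recommendation reading: sum weights, perform only the strongest behaviour."""
    totals = defaultdict(float)
    for cond in detections:
        for behaviour, w in RECOMMENDATIONS[cond].items():
            totals[behaviour] += w
    return max(totals, key=totals.get)


def instructed_behaviours(detections):
    """Instruction reading: every detection commands its behaviour."""
    return {INSTRUCTIONS[cond] for cond in detections}


if __name__ == "__main__":
    bird = ["wings", "beak", "chirp"]
    late = bird + ["fur"]   # one detection from the earlier cat arrives late
    print(recommended_behaviour(bird), recommended_behaviour(late))    # watch watch
    print(instructed_behaviours(late) - instructed_behaviours(bird))   # {'approach'}
```

The tolerance in this toy depends on the stray detection carrying less total weight than the correct ones; a larger timing error could still flip the decision, which is why only a small degree of error is tolerable.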

Layering, with most connectivity running between specific layers, is a very pronounced feature of the cortex. The cortex is a thin sheet of neurons divided into about 150 areas in each hemisphere. In every area there are four or five layers of pyramidal neurons, numbered with the Roman numerals II to VI (layer I contains very few neuron cell bodies). Most of the connectivity within an area runs between specific layers. Inputs generally arrive in layer IV. Outputs from layer IV go to layers II and III, outputs from layers II/III go to layers V and VI, and outputs from layer VI go to layer IV in other areas.
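The laminar pathway described above can be written down as a small directed graph. This Python sketch is a deliberate simplification: it uses only the connections listed in the paragraph, treats "other areas" as a simple chain in which layer VI of one area feeds layer IV of the next, and counts one step per connection. With this wiring, information from a single input reaches each layer after a fixed number of steps, which is what lets a layered arrangement keep input states apart without tags.

```python
from collections import deque

# Within-area connections as listed in the paragraph above (a simplification).
WITHIN_AREA = {"IV": ["II/III"], "II/III": ["V", "VI"], "V": [], "VI": []}


def trace(n_areas):
    """Breadth-first walk from an input arriving in layer IV of area 0.

    Assumption: layer VI of area k projects to layer IV of area k + 1.
    Returns sorted (step, area, layer) tuples, one step per connection.
    """
    start = (0, "IV")
    steps = {start: 0}
    queue = deque([start])
    while queue:
        area, layer = queue.popleft()
        targets = [(area, nxt) for nxt in WITHIN_AREA[layer]]
        if layer == "VI" and area + 1 < n_areas:
            targets.append((area + 1, "IV"))
        for target in targets:
            if target not in steps:
                steps[target] = steps[(area, layer)] + 1
                queue.append(target)
    return sorted((step, area, layer) for (area, layer), step in steps.items())


if __name__ == "__main__":
    for step, area, layer in trace(2):
        print(f"step {step}: area {area}, layer {layer}")
```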

ONLY TWO POSSIBLE HIGH LEVEL ARCHITECTURES FOR COMPLEX CONTROL SYSTEMS

A complex control system therefore must have one of just two possible high level architectures. In the instruction architecture, conditions are specified by a designer and are unambiguous in behavioural terms. There is a modular hierarchy of resources that detects the presence of conditions and generates the corresponding instructions, together with a reference memory subsystem. In the recommendation architecture, conditions are defined heuristically and are partially ambiguous in behavioural terms. There is a modular hierarchy of resources that defines and detects conditions, and a subsystem that interprets each condition detection as a range of recommendations in favour of many different behaviours. At each point in time this subsystem determines and implements the most strongly recommended behaviour. Consequence feedback can change the recommendation strengths of recently performed behaviours, but cannot be used to change condition definitions directly.

Computers always have the instruction architecture because it is the most resource efficient solution for a complex control system whose behaviours are specified by a designer. Brains always have the recommendation architecture because it is the most resource efficient solution when behaviours have to be learned.