10th March 2021

The impact of the need to change functionality on the architectures of brains and computers

Both computer systems and brains have to change the way they function over time. In the case of computers, feature changes are made by human designers. In the case of brains, behaviour changes are made heuristically through experience, a process we call learning. In this blog I will discuss different ways in which the need to make changes impacts the two types of system.

BRAINS AND COMPUTERS ARE CONTROL SYSTEMS

Both brains and computers are control systems. They get information about their environment and about their own state. They process this information to detect specific circumstances, or conditions, and in response to conditions perform appropriate behaviours. For example, suppose that when a document is being typed, a computer gets information that the shift key and the A key have been pressed at the same time. It also has information that a specific document is open in a window on the screen, the cursor is at a specific location in the document, and a particular font is associated with that location. The combination of all these information conditions is associated with the behaviour of displaying a particular form of the letter "A" at a specific location on the screen.

When a finger touches a hot stove, the brain receives information about pain signals coming from the finger, visual information about the stove and its position relative to the body, and proprioceptive information about the position of the body and the state of its muscles. The combination of all these information conditions is associated with the behaviour of rapidly moving the finger away from the stove.

Of course, in a computer system the conditions, behaviours and associations are all specified by a designer. For the brain, the conditions, many of the behaviours, and the associations are all defined heuristically.

TWO TYPES OF ASSOCIATION BETWEEN CONDITIONS AND BEHAVIOURS

When a condition is detected, it limits the range of system behaviours that could be appropriate. For example, if the A key is pressed on a computer keyboard while editing a document, the range of possible responses is limited. If it is pressed alone, the letter "a" is displayed. If the shift key is pressed at the same time, the letter "A" is displayed. If the command key is pressed at the same time, all the content of the document is highlighted. There are a few other options, but a wide range of behaviours such as displaying any letter in the alphabet other than A, or displaying a number, are unambiguously ruled out.

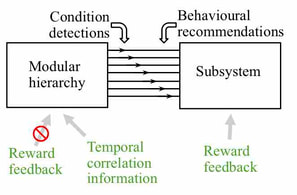

This illustrates the general situation in computer systems. If a condition is detected, it indicates with 100% confidence that the appropriate behaviour is one of a small group of possible behaviours. Small combinations of conditions are associated with 100% confidence that one specific behaviour is appropriate. In other words, such a combination generates an instruction to perform the behaviour.
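To make the idea of an unambiguous association concrete, here is a minimal Python sketch. It assumes a simplified keyboard that only distinguishes the A key, shift and command, and it ignores the document, cursor and font conditions; the condition names and the `select_behaviour` function are hypothetical. Each exact combination of detected conditions looks up exactly one behaviour, so a match is effectively an instruction.

```python
# Hypothetical condition names; each exact combination commands one behaviour.
UNAMBIGUOUS_RULES = {
    frozenset({"key_A"}): "display 'a'",
    frozenset({"key_A", "shift"}): "display 'A'",
    frozenset({"key_A", "command"}): "select all",
}


def select_behaviour(detected):
    """Return the one behaviour commanded by exactly this combination of
    detected conditions, or None if no rule matches."""
    return UNAMBIGUOUS_RULES.get(frozenset(detected))


print(select_behaviour({"key_A", "shift"}))  # display 'A'
print(select_behaviour({"key_A", "command"}))  # select all
```

Any combination not in the table, such as the B key, simply has no associated behaviour: everything outside the listed options is ruled out.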

However, an alternative type of association is also possible. In this alternative, detection of a condition indicates a small probability that any one of a large number of different behaviours is appropriate, with a different probability for each behaviour. These probabilities can be viewed as recommendation strengths in favour of the behaviours. In other words, condition detections are partially ambiguous, because the detection of a condition or group of conditions only ever generates recommendations. In this situation, the appropriate behaviour is the one with the largest total recommendation strength across all currently detected conditions.
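The partially ambiguous alternative can be sketched in Python as follows. This is an illustrative assumption rather than a claim about how brains store anything: the condition names, behaviour names and strengths are invented, and `select_behaviour` is a hypothetical helper. Each detected condition contributes a strength to several behaviours, and the behaviour with the largest total is selected.

```python
from collections import defaultdict

# Invented recommendation strengths: every condition recommends several
# behaviours, each with its own strength.
WEIGHTS = {
    "c1": {"approach": 0.4, "withdraw": 0.1, "ignore": 0.2},
    "c2": {"approach": 0.1, "withdraw": 0.5, "ignore": 0.1},
    "c3": {"approach": 0.3, "withdraw": 0.15, "ignore": 0.3},
}


def total_recommendations(detected, weights):
    """Sum each behaviour's strength across all currently detected conditions."""
    totals = defaultdict(float)
    for condition in detected:
        for behaviour, strength in weights.get(condition, {}).items():
            totals[behaviour] += strength
    return dict(totals)


def select_behaviour(detected, weights):
    """Return the behaviour with the largest total recommendation strength."""
    totals = total_recommendations(detected, weights)
    return max(totals, key=totals.get) if totals else None


print(select_behaviour({"c1", "c3"}, WEIGHTS))  # approach
print(select_behaviour({"c2", "c3"}, WEIGHTS))  # withdraw
```

No single condition settles the choice here; the same condition c3 takes part in selecting different behaviours depending on what else is detected.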

This alternative requires the definition of far more conditions than if condition detections are behaviourally unambiguous, and therefore far more resources. However, as we will see below, it has huge advantages if behaviours are defined heuristically.

IMPACT OF THE NEED TO MAKE MODIFICATIONS ON COMPUTER ARCHITECTURE

There is always a need to make changes to the features of computer systems. A perennial problem with such changes is that they tend to introduce undesirable side effects on other features. As a result, following any such design changes a system is extensively tested before release to customers, and even then some problems or "bugs" always seem to escape the testing scrutiny. Why is making changes such a problem?

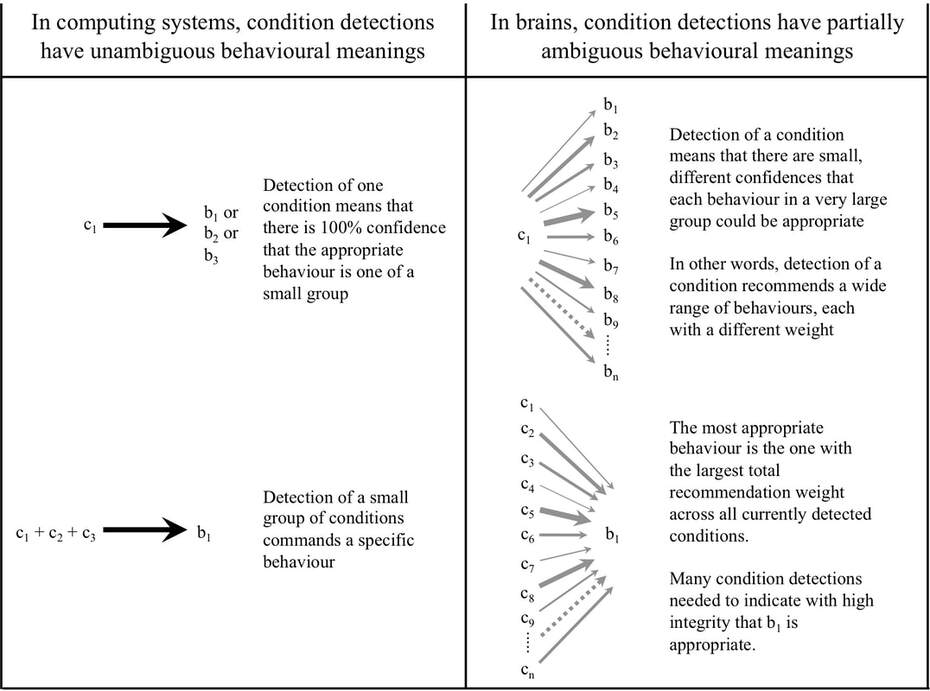

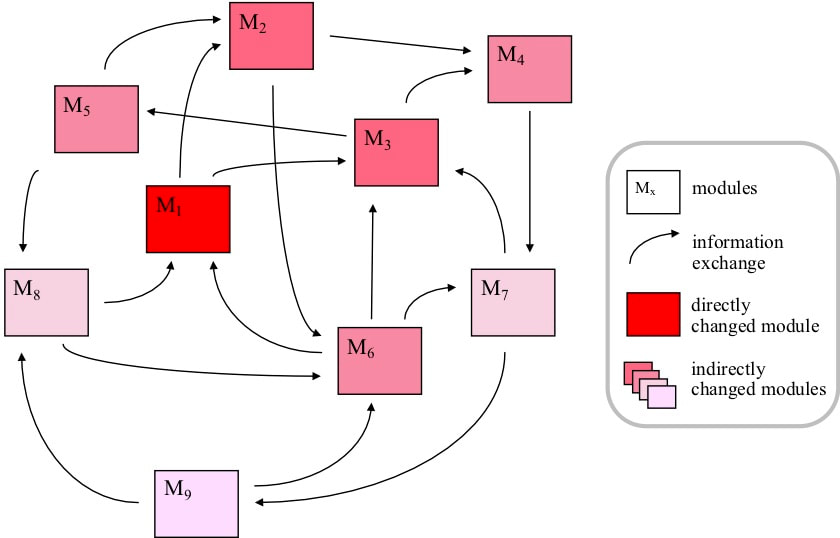

In the last blog we talked about how computer resources are organized into modules. Even though information exchange between modules is minimized as far as possible, there will always be significant exchanges. We also discussed why, to conserve resources, any one module supports many different features, and any one feature needs processes performed by many modules. Think about the problem this presents if a feature change is needed. All the processes needed by a feature are performed by modules, so to change a feature, at least one module must be changed. The first problem is that if a module is changed to support a change to one feature, there is a risk of undesirable side effects on all the other features also supported by that module. The second problem is that a change to one module could affect the information that module passes to other modules. Changes to that information could affect the features supported by those other modules, and the information passed by those modules to yet other modules. So there can be an expanding wave of side effects. Of course, the smaller the amount of information exchange between modules, the lower the risk of side effects, so a fair amount of effort goes into limiting and managing such exchanges.
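The expanding wave of side effects can be sketched as a walk over a small dependency graph. The modules, features and information flows below are invented for illustration, and `features_at_risk` is a hypothetical helper: it finds every module that could receive altered information from a changed module, then every feature that uses any of those modules.

```python
from collections import deque

# Hypothetical word-processor features and the modules each one relies on.
FEATURES = {
    "spell_check": {"text_store", "dictionary"},
    "autosave": {"text_store", "file_io"},
    "print": {"layout", "file_io"},
    "word_count": {"text_store"},
}
# Hypothetical information flows: module -> modules that receive its outputs.
INFORMATION_FLOW = {
    "text_store": {"layout"},
    "dictionary": set(),
    "file_io": set(),
    "layout": set(),
}


def modules_at_risk(changed_module):
    """Breadth-first walk along information flows from the changed module."""
    at_risk, queue = {changed_module}, deque([changed_module])
    while queue:
        for receiver in INFORMATION_FLOW.get(queue.popleft(), set()):
            if receiver not in at_risk:
                at_risk.add(receiver)
                queue.append(receiver)
    return at_risk


def features_at_risk(changed_module):
    """Every feature that uses any module the change could reach."""
    at_risk = modules_at_risk(changed_module)
    return sorted(f for f, mods in FEATURES.items() if mods & at_risk)


print(features_at_risk("dictionary"))  # ['spell_check']
print(features_at_risk("text_store"))  # ['autosave', 'print', 'spell_check', 'word_count']
```

Changing `text_store` for the sake of one feature puts all four at risk, including `print`, which never uses `text_store` directly but receives its information through `layout`.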

You might ask, doesn't software make this problem easier? It is certainly easier to make changes in software, but software is also a resource forced into a modular hierarchy, and the undesirable side effect problem remains the same. So whenever there is a design change, it is followed by regression testing that tries to verify that all the unchanged features still work as intended.
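A minimal sketch of the idea behind regression testing, assuming two hypothetical features built on one shared helper that stands in for a module: record every feature's outputs before a change, rerun the same inputs afterwards, and report any feature whose behaviour moved. The feature names, inputs and helpers are invented for illustration.

```python
def make_features(normalise):
    """Build two features on top of one shared helper, a stand-in for a module."""
    return {
        "word_count": lambda text: len(normalise(text).split()),
        "char_count": lambda text: len(normalise(text)),
    }


TEST_INPUTS = ["", "hello world", "  padded  "]


def run_suite(features):
    """Record every feature's output on every test input."""
    return {name: [feature(x) for x in TEST_INPUTS] for name, feature in features.items()}


def regression_failures(baseline, features):
    """Names of features whose outputs differ from the recorded baseline."""
    current = run_suite(features)
    return sorted(name for name in baseline if current[name] != baseline[name])


baseline = run_suite(make_features(lambda text: text))
# The shared helper is changed (say, to trim whitespace for some other feature).
changed = make_features(lambda text: text.strip())
print(regression_failures(baseline, changed))  # ['char_count']
```

The change was made with one purpose in mind, yet the unchanged `char_count` feature now behaves differently; only rerunning the old checks reveals it, and only for the inputs someone thought to test.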

IMPACT OF THE NEED TO LEARN ON BRAIN ARCHITECTURE

Artificial neural networks are an attempt to design learning systems, using approaches vaguely based on the brain. Such networks can learn to perform some tasks very effectively. However, one problem widely experienced with such networks is catastrophic interference. Once a network has been trained to perform one type of task effectively, if it is then trained to perform a different type of task, its ability to perform the first task can be completely erased.
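The core of the interference problem can be seen even in a toy linear model, far simpler than any real neural network. In this sketch (an illustration under invented assumptions; the tasks and helper names are hypothetical), two tasks share one weight. Training on task B after task A pulls that shared weight away from where task A needs it, even though a single weight vector solving both tasks exists.

```python
def predict(w, x):
    """Linear model: weighted sum of the inputs."""
    return sum(wi * xi for wi, xi in zip(w, x))


def train(w, data, lr=0.1, epochs=500):
    """Per-example gradient descent on squared error (factor 2 folded into lr)."""
    w = list(w)
    for _ in range(epochs):
        for x, y in data:
            err = predict(w, x) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w


def mse(w, data):
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)


# Both tasks use input 0, so weight w[0] is a shared resource.
TASK_A = [((1.0, 1.0), 2.0)]
TASK_B = [((1.0, 0.0), 0.0)]

w = train([0.0, 0.0], TASK_A)
error_a_before = mse(w, TASK_A)  # close to 0: task A learned
w = train(w, TASK_B)
error_a_after = mse(w, TASK_A)   # close to 1: task A partly forgotten
# A weight vector that solves both tasks exists, e.g. [0.0, 2.0].
```

Sequential training finds a solution for task B but not the joint one, because nothing in task B's training signal says anything about task A.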

As discussed last week, the need to limit the use of resources forces brain information processing resources into a modular hierarchy. Suppose that the brain needed to learn a new behaviour, and that such learning could be achieved by changes to just one module. The problem is the same as for computer systems. Because every module supports many different behaviours, changing a module to learn a new behaviour risks interfering with previously learned behaviours that depend on processes performed by the same module. And because of information exchanges between modules, undesirable interference with earlier learning can propagate across even more behaviours.

The extensive regression testing performed on computers following changes is not available to brains. The only feedback available is consequence feedback: information about whether the situation following a behaviour was favourable or unfavourable.

Consider the situation if the brain used unambiguous associations between conditions and behaviours in the manner of a computer. Suppose that to support new learning, an experimental change to a condition was made, and this change resulted in an effective new behaviour. Detection of positive consequences could then confirm the change. However, consequence feedback at the time of the new behaviour provides no information about the effect on previously learned behaviours. Each time a new behaviour is learned, some conditions change, and information exchanges between modules mean that conditions in other modules are affected as well. Because conditions are associated with unambiguous commands, if any of the changed conditions were associated with previously learned behaviours, those behaviours would not be carried out when next needed. Some other behaviour might be selected, and consequence feedback would be negative. Such feedback would indicate that a different behaviour was needed, but it carries no information about what that behaviour should be. The previous learning has been lost.
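Here is a small sketch of how unambiguous associations lose earlier learning, assuming (hypothetically) that a condition is detected when all of its input features are present and that each condition commands exactly one behaviour. All names are invented for illustration. When learning something new redefines a shared condition, the old input no longer triggers the old behaviour, and nothing else recommends it.

```python
# Hypothetical conditions: each is detected when all its input features are present.
conditions = {
    "c_food": {"smell_sweet", "colour_red"},
    "c_danger": {"heat", "pain"},
}
# Unambiguous association: each condition commands exactly one behaviour.
commands = {"c_food": "eat", "c_danger": "withdraw"}


def commanded(inputs, conditions, commands):
    """Behaviours commanded by the conditions detected in these inputs."""
    return sorted(
        commands[name]
        for name, features in conditions.items()
        if features <= inputs
    )


ripe_fruit = {"smell_sweet", "colour_red"}
before = commanded(ripe_fruit, conditions, commands)  # ['eat']

# Learning some new behaviour redefines the shared condition c_food.
changed = dict(conditions, c_food={"colour_red", "round"})
after = commanded(ripe_fruit, changed, commands)  # []: "eat" is never commanded
```

A negative consequence at this point could report that something went wrong, but the empty result holds no hint that `eat` was the behaviour that used to be produced.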

Of course, if every behaviour had a completely separate set of conditions, then the interference would not occur. The problem is that such a solution would eliminate resource sharing between different behaviours.

Hence for a system like the brain that must learn many different behaviours while limiting the resources needed, unambiguous associations between conditions and behaviours are not feasible. However, consider the situation if these associations were partially ambiguous. A learned behaviour would be recommended by a wide range of conditions. Between when the behaviour was learned and when it was again appropriate, many new behaviours could have been learned, involving changes to some of these conditions. However, because the previously learned behaviour was recommended by many different conditions, there is a chance that it would still be the most strongly recommended when needed later. Favourable consequence feedback following the behaviour could then increase the recommendation strengths across the changed population of conditions.

Even if an incorrect behaviour was selected, it could sometimes be immediately corrected. Consequence feedback would reduce the recommendation weights of all the currently detected conditions in favour of the incorrect behaviour. This reduction could leave the previously learned behaviour as the new predominant recommendation.
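The two preceding paragraphs can be sketched together in Python. Everything here is an invented illustration: the weights, the condition and behaviour names, and the `select` and `feedback` helpers are hypothetical, and the feedback step sizes are arbitrary. After later learning has changed one condition and introduced another, the wrong behaviour is selected; unfavourable feedback lowers its weights across the currently detected conditions, the previously learned behaviour becomes predominant again, and favourable feedback then strengthens it across the changed population of conditions.

```python
from collections import defaultdict


def select(detected, weights):
    """Behaviour with the largest total recommendation strength."""
    totals = defaultdict(float)
    for condition in detected:
        for behaviour, strength in weights.get(condition, {}).items():
            totals[behaviour] += strength
    return max(totals, key=totals.get)


def feedback(detected, weights, behaviour, delta):
    """Consequence feedback: adjust the weight in favour of `behaviour` for every
    currently detected condition (delta > 0 favourable, delta < 0 unfavourable)."""
    for condition in detected:
        row = weights.setdefault(condition, {})
        row[behaviour] = row.get(behaviour, 0.0) + delta


# Invented weights after "eat" was learned: several conditions recommend it.
weights = {
    "c1": {"eat": 0.3, "flee": 0.1},
    "c2": {"eat": 0.3, "flee": 0.2},
    "c3": {"eat": 0.2, "flee": 0.1},
    "c4": {"eat": 0.2, "flee": 0.1},
}
# Later learning changed c1 (no longer detected here) and added c5.
weights["c5"] = {"flee": 0.6}
detected = {"c2", "c3", "c4", "c5"}

first = select(detected, weights)          # "flee" (1.0 vs 0.7): wrong
feedback(detected, weights, "flee", -0.1)  # unfavourable consequences
second = select(detected, weights)         # "eat" (0.7 vs 0.6): corrected
feedback(detected, weights, "eat", 0.1)    # favourable consequences
```

After the last step, even the newly added condition c5 carries some recommendation in favour of `eat`.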

Although learning many different behaviours with limited resources is impractical if there are unambiguous associations between conditions and behaviours, it is feasible using partially ambiguous associations. Even so, changes to conditions will need very careful management. In particular, although consequence feedback is critical for determining recommendation weights, it cannot usefully guide changes to conditions. The problem is that each condition is associated with a range of recommendation weights in favour of different behaviours. If consequence feedback following a behaviour were used to drive changes to some condition definitions, those changes could be good for the recent behaviour, but would damage the integrity of the recommendation weights in favour of all other behaviours. Hence the only type of information that can be used to define conditions is temporal correlations between past condition detections.
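As a rough sketch of defining conditions from temporal correlations alone, the following counts how often pairs of existing conditions were detected at the same time in the past and proposes frequently co-occurring pairs as candidate new conditions. Simple pairwise co-occurrence counting is an assumed stand-in for "temporal correlations", and the history, threshold and `propose_conditions` helper are hypothetical. The key point is that no consequence feedback appears anywhere in it.

```python
from collections import Counter
from itertools import combinations


def propose_conditions(history, min_count):
    """Count how often each pair of conditions was detected together and
    return the pairs that co-occurred at least `min_count` times.
    Only past detections are used; no consequence feedback is involved."""
    pair_counts = Counter()
    for detected in history:
        pair_counts.update(combinations(sorted(detected), 2))
    return sorted(pair for pair, n in pair_counts.items() if n >= min_count)


# Hypothetical record of which conditions were detected at each past moment.
history = [
    {"c1", "c2", "c3"},
    {"c1", "c2"},
    {"c2", "c4"},
    {"c1", "c2", "c4"},
]
print(propose_conditions(history, min_count=3))  # [('c1', 'c2')]
```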

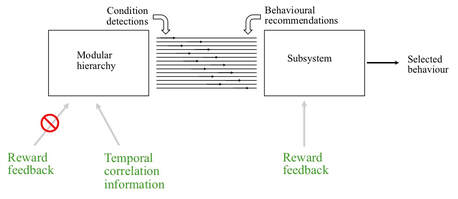

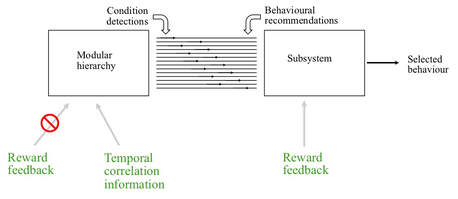

The implication is that in a complex learning system there must be a subsystem completely separate from the modular hierarchy that defines and detects conditions. This additional subsystem gets condition detections from the modular hierarchy, and defines the recommendation weights associated with each detection. Consequence feedback is available within this subsystem to change recommendation weights, but is not available in the modular hierarchy to change condition definitions.
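The separation can be sketched as two classes, purely as an illustration under assumed names: a hypothetical `ConditionHierarchy` that defines and detects conditions and never sees consequence feedback, and a hypothetical `BehaviourSelector` that holds the recommendation weights and is the only place where feedback is applied.

```python
from collections import defaultdict


class ConditionHierarchy:
    """Stand-in for the modular hierarchy: defines and detects conditions.
    It has no method that accepts consequence feedback."""

    def __init__(self, definitions):
        self.definitions = definitions  # condition name -> set of input features

    def detect(self, inputs):
        return {name for name, feats in self.definitions.items() if feats <= inputs}


class BehaviourSelector:
    """Stand-in for the separate subsystem: owns the recommendation weights
    and is the only component that consequence feedback changes."""

    def __init__(self):
        self.weights = defaultdict(lambda: defaultdict(float))

    def select(self, detected, behaviours):
        totals = {b: sum(self.weights[c][b] for c in detected) for b in behaviours}
        return max(totals, key=totals.get)

    def consequence_feedback(self, detected, behaviour, delta):
        for c in detected:
            self.weights[c][behaviour] += delta


hierarchy = ConditionHierarchy({"c_hot": {"heat"}, "c_hurt": {"pain"}})
selector = BehaviourSelector()

detected = hierarchy.detect({"heat", "pain", "stove"})
first_choice = selector.select(detected, ["touch", "withdraw"])   # "touch" (all weights 0)
selector.consequence_feedback(detected, "touch", -0.5)            # unfavourable outcome
second_choice = selector.select(detected, ["touch", "withdraw"])  # "withdraw"
```

Feedback reshapes the weights in `selector`, while the condition definitions in `hierarchy` stay exactly as they were.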

This separation between a modular hierarchy that defines and detects conditions and a subsystem that associates condition detections with behaviours is called the recommendation architecture.